What are published effect sizes and study power in recent cognitive neuroscience and psychology?

Given the ongoing debate on the lack of reproducibility in many scientific fields, Szucs and Ioannidis (2017) analysed published records in cognitive neuroscience and experimental psychology journals to determine publication practices in these fields.

The investigators used a text-mining approach to extract 26,841 records of degrees of freedom and t-values from 3,801 papers published between Jan 2011 and Aug 2015 in 18 journals. These data allowed the investigators to estimate the distribution of published effect sizes, the power of the studies, and how often statistically significant findings are likely to be false.
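To make the extraction step concrete, the sketch below pulls `t(df) = value` reports out of a piece of text and converts each one to a standardised effect size (Cohen's d). The regular expression, the function names, and the sample sentence are illustrative assumptions, not the authors' pipeline. The conversions are the standard textbook ones: d = t / sqrt(df + 1) for a one-sample or paired test, and d = 2t / sqrt(df + 2) for a two-sample test with equal group sizes.

```python
import math
import re

# Matches reports such as "t(24) = 2.50" or "t(38)=-1.20".
# Illustrative pattern only (an assumption); it ignores, for example,
# typographic minus signs and reports written as "t = 2.5, df = 24".
T_REPORT = re.compile(
    r"\bt\s*\(\s*(\d+(?:\.\d+)?)\s*\)\s*=\s*([-+]?\d+(?:\.\d+)?)"
)


def extract_t_records(text):
    """Return a list of (degrees_of_freedom, t_value) pairs found in text."""
    return [(float(df), float(t)) for df, t in T_REPORT.findall(text)]


def d_one_sample(t, df):
    """Cohen's d for a one-sample or paired t-test, where n = df + 1."""
    return t / math.sqrt(df + 1)


def d_two_sample(t, df):
    """Cohen's d for a two-sample t-test with equal groups, where N = df + 2."""
    return 2 * t / math.sqrt(df + 2)


# Hypothetical sentence, written for this example.
sample = "A main effect, t(24) = 2.50, p < .05; no interaction, t(38)=-1.20."

for df, t in extract_t_records(sample):
    print(df, t, round(d_one_sample(t, df), 3), round(d_two_sample(t, df), 3))
```

Because a bare t-value does not say which test produced it, the loop prints both conversions for every record. Any real analysis would need a rule for choosing between them.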

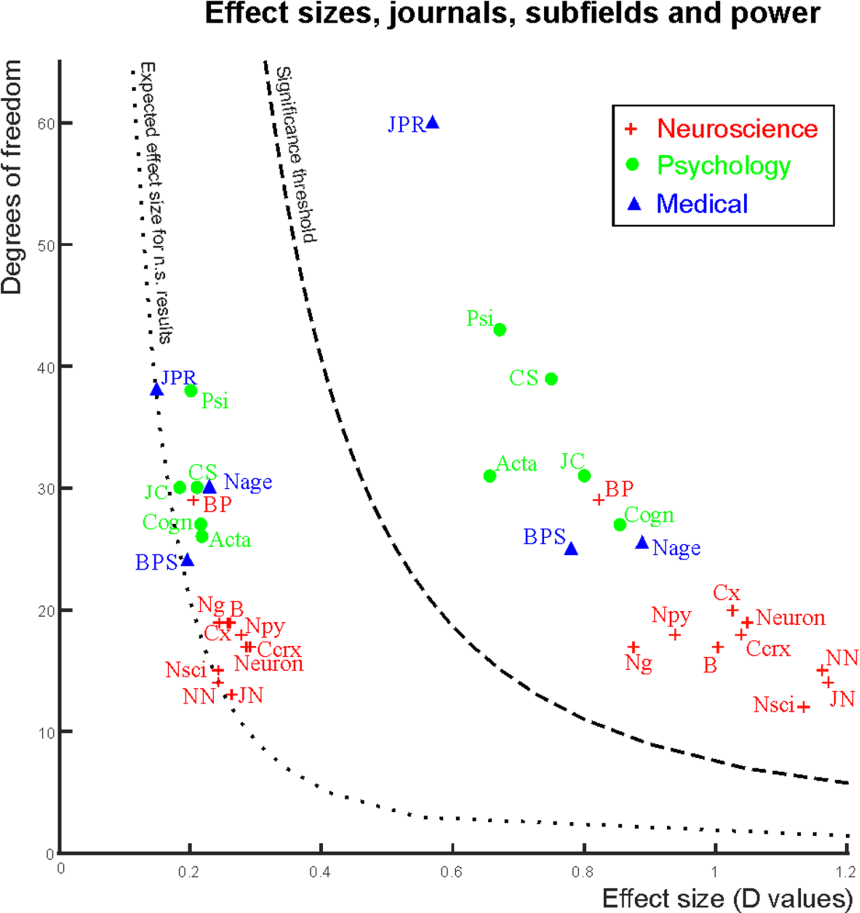

What did they find? Figure 1 shows the median effect sizes and degrees of freedom for each journal analysed. Journal markings to the left of the significance threshold show medians for statistically non-significant records, while journal markings to the right show medians for statistically significant records. Cognitive neuroscience journals report the largest effect sizes but also have the smallest degrees of freedom. This means they have the lowest study power to detect effects (assuming that effect sizes are true) and are the most susceptible to exaggerated effect sizes.

Figure 1: Median effect sizes and degrees of freedom. Journal abbreviations: Neuroscience: Nature Neuroscience (NN), Neuron, Brain (B), The Journal of Neuroscience (JN), Cerebral Cortex (Ccrx), NeuroImage (Ng), Cortex (Cx), Biological Psychology (BP), Neuropsychologia (NPy), Neuroscience (NSci). Psychology: Psychological Science (Psi), Cognitive Science (CS), Cognition (Cogn), Acta Psychologica (Acta), Journal of Experimental Child Psychology (JC). Medically oriented journals: Biological Psychiatry (BPS), Journal of Psychiatric Research (JPR), Neurobiology of Ageing (Nage). (Figure 4 in paper).

The overall median power to detect small, medium, and large effects was 0.12, 0.44, and 0.73, respectively, which the investigators attribute to persistently small sample sizes. In addition, journal impact factors correlated negatively with statistical power.
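To see how sample size drives these power figures, here is a minimal sketch of a power calculation for a two-sided, two-sample comparison. It uses a normal approximation to the t-test, which is a simplification and not the method used in the paper. The function name and the group size of 20 are hypothetical. The effect sizes 0.2, 0.5, and 0.8 are Cohen's conventional small, medium, and large values.

```python
import math
from statistics import NormalDist


def approx_power_two_sample(d, n_per_group, alpha=0.05):
    """Approximate power of a two-sided, two-sample test of a mean difference.

    Uses the normal approximation to the t distribution, so it slightly
    overstates power when samples are small.
    """
    z = NormalDist()
    z_crit = z.inv_cdf(1 - alpha / 2)        # two-sided critical value
    shift = d * math.sqrt(n_per_group / 2)   # expected value of the test statistic
    # Probability of landing in either rejection region.
    return z.cdf(shift - z_crit) + z.cdf(-shift - z_crit)


# Hypothetical group size, chosen only for illustration.
for label, d in [("small", 0.2), ("medium", 0.5), ("large", 0.8)]:
    print(label, round(approx_power_two_sample(d, n_per_group=20), 2))
```

Raising `n_per_group` while holding `d` fixed increases the returned power, which is the reason small samples keep power low for small and medium effects.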

Given these findings, the investigators conclude that the recently reported low replication success in psychology is realistic, and worse replication is expected for cognitive neuroscience. They recommend different research practices to resolve the lack of reproducibility, such as:

- pre-registration of study objectives

- compulsory pre-study power calculations

- enforcing minimally required study power

- making the statistical significance threshold more stringent (p < 0.001) if null hypothesis significance testing is used

- publishing negative findings when study design and power levels justify this, and

- using Bayesian analysis to provide probabilities for both the null and alternative hypotheses.
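The link between power, the significance threshold, and how often significant findings are false can be illustrated with a short Bayes-rule calculation. This is a simplified sketch that ignores bias and selective reporting, so it is not the paper's own calculation. The function name and the prior probability of 0.5 are assumptions made for illustration.

```python
def false_report_probability(alpha, power, prior_h1):
    """Share of statistically significant results that are false positives.

    alpha    : significance threshold (chance of a false positive under H0)
    power    : chance of a significant result when H1 is true
    prior_h1 : assumed proportion of tested hypotheses for which H1 is true
    """
    if not 0 < prior_h1 < 1:
        raise ValueError("prior_h1 must lie strictly between 0 and 1")
    false_positives = alpha * (1 - prior_h1)
    true_positives = power * prior_h1
    return false_positives / (false_positives + true_positives)


# Median power for medium effects reported in the study, with an
# assumed (hypothetical) 50% prior probability that H1 is true.
print(round(false_report_probability(alpha=0.05, power=0.44, prior_h1=0.5), 3))

# Stricter threshold, assuming samples were enlarged to keep power at 0.44.
print(round(false_report_probability(alpha=0.001, power=0.44, prior_h1=0.5), 4))
```

A stricter threshold lowers power unless sample sizes grow, so the second call holds power fixed only to isolate the effect of `alpha`.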

Reference

Szucs D and Ioannidis JPA (2017) Empirical assessment of published effect sizes and power in the recent cognitive neuroscience and psychology literature. PLoS Biol 15(3):e2000797.